Quantum computing is an emerging paradigm of computing that harnesses the strange rules of quantum physics. Instead of classical bits (which are either 0 or 1), quantum computers use quantum bits, or qubits, that can exist in a combination of 0 and 1 at the same time (a property called superposition). This means a handful of qubits can represent an enormous number of possible states simultaneously, which lets the machine work with many candidate solutions at once (hstech.io).

For example, 100 qubits could encode about 2^100 states at once – an astronomical number far beyond a classical computer’s reach.

IBM likens this to using “the operating system of the universe” (quantum mechanics) to compute, potentially solving certain problems (from cryptography to molecular design) far faster than today’s best supercomputers.

Quantum Processor

At the heart of a quantum computer is a quantum processor – often just a small chip of superconductor or other quantum hardware. This picture shows a prototype superconducting qubit chip with a tiny mechanical resonator (lower left) that was placed into a quantum superposition. In practice, qubit chips are kept inside elaborate cryogenic refrigerators (see below) to maintain their fragile quantum states.

Researchers worldwide believe quantum computing could unlock breakthroughs in fields like cryptography and drug discovery that classical machines cannot efficiently tackle.

Qubits, Superposition, Entanglement, and Interference

Quantum computing relies on several core quantum mechanics principles. The most important ones are typically described as follows:

Superposition

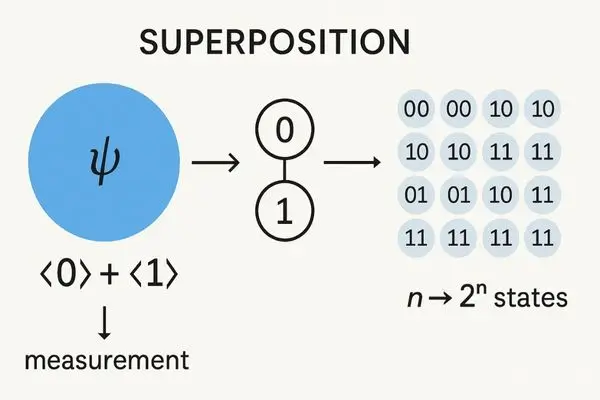

A qubit can be 0 and 1 at the same time (a superposition of states). Upon measurement, it “collapses” to either 0 or 1 with certain probabilities (ibm.com).

While classical bits have a single state, n qubits in superposition can represent all 2^n classical states at once. For example, IBM notes that as qubits are combined, their superpositions “grow exponentially in complexity” – 100 qubits yield an astronomically large state space.
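As a rough illustration of the superposition idea above, the following sketch models a single qubit as a pair of amplitudes and samples measurement outcomes from it. It is a toy classical simulation, not how real hardware is programmed, and all function names are made up for this example.

```python
import math
import random

def hadamard_on_zero():
    """Amplitudes (amp_0, amp_1) of a qubit put into an equal superposition."""
    s = 1 / math.sqrt(2)
    return (s, s)

def measurement_probabilities(amplitudes):
    """Born rule: the probability of each outcome is |amplitude|^2."""
    return [abs(a) ** 2 for a in amplitudes]

def measure(amplitudes, rng):
    """Sample one outcome index; this models the 'collapse' to 0 or 1."""
    probs = measurement_probabilities(amplitudes)
    return rng.choices(range(len(amplitudes)), weights=probs, k=1)[0]

def uniform_superposition(n_qubits):
    """Amplitude list for n qubits in equal superposition: 2**n entries."""
    dim = 2 ** n_qubits
    return [1 / math.sqrt(dim)] * dim

state = hadamard_on_zero()
probs = measurement_probabilities(state)

rng = random.Random(0)  # fixed seed so repeated runs give the same samples
counts = [0, 0]
for _ in range(1000):
    counts[measure(state, rng)] += 1

print("probabilities:", probs)
print("counts over 1000 measurements:", counts)
print("amplitudes needed for 10 qubits:", len(uniform_superposition(10)))
```

Each individual measurement still returns a plain 0 or 1; the superposition only shows up in the statistics over many runs.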

Entanglement

Qubits can become entangled, meaning their states are strongly linked even when separated. Measuring one entangled qubit immediately tells you something about the outcome of measuring the other, no matter the distance, although this correlation cannot be used to send information faster than light. In practice, this lets a quantum computer coordinate many qubits as a single correlated system – a powerful resource that has no classical analog.
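To make entanglement a little more concrete, here is a small sketch that builds the standard two-qubit Bell state by hand, using a list of four amplitudes for the outcomes 00, 01, 10, and 11. The helper names are illustrative only, and the simulation is purely classical.

```python
import math

def apply_hadamard_first_qubit(state):
    """Apply a Hadamard gate to the first (left) qubit of [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def apply_cnot(state):
    """CNOT with the first qubit as control: swaps the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

start = [1.0, 0.0, 0.0, 0.0]  # both qubits start in 0
bell = apply_cnot(apply_hadamard_first_qubit(start))

labels = ["00", "01", "10", "11"]
bell_probs = {label: abs(a) ** 2 for label, a in zip(labels, bell)}
print(bell_probs)
```

Only the outcomes 00 and 11 have nonzero probability, so the two measured bits always agree even though each one on its own looks like a fair coin flip.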

Interference

The probability amplitudes of qubit states behave like waves that can reinforce or cancel each other out. By carefully arranging computations (via quantum gates), a quantum computer uses interference to amplify the correct answers and cancel the wrong ones (ibm.com).

IBM calls interference “the engine of quantum computing” – when amplitudes overlap, they can add up (increasing a solution’s probability) or destructively cancel (eliminating unwanted possibilities).
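A minimal way to see interference in numbers is to apply the Hadamard gate twice to a qubit that starts in 0. The sketch below (a toy classical calculation with made-up helper names) shows the two paths to outcome 1 cancelling while the two paths to outcome 0 reinforce.

```python
import math

S = 1 / math.sqrt(2)

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    a0, a1 = state
    return (S * (a0 + a1), S * (a0 - a1))

once = hadamard((1.0, 0.0))  # equal superposition of 0 and 1
twice = hadamard(once)       # contributions to 1 cancel, contributions to 0 add

print("after one Hadamard: ", once)
print("after two Hadamards:", twice)
```

After the second gate the amplitude for 1 is zero (destructive interference) and the amplitude for 0 is back to roughly 1 (constructive interference), up to floating-point rounding.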

Quantum gates and algorithms.

Quantum gates are operations on qubits (analogous to logic gates) that manipulate superposition and create entanglement. A quantum algorithm prepares qubits in a superposition, applies gates to entangle them, and produces interference patterns.

In IBM’s description, this process “cancels out” many possible outcomes and “amplifies” the correct result, which is then measured (ibm.com). In essence, the algorithm guides the quantum waves so that valid solutions are the most likely outcomes at the end.

Together, these principles allow quantum computers to process information in fundamentally new ways. Notably, when qubits are measured at the end of a calculation, they yield binary answers (0 or 1) just like a classical computer, but the path to those answers leveraged the rich structure of superposition and interference.
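The prepare, transform, and interfere pattern described above can be sketched with Grover's search on two qubits, a well-known textbook case where a single round is enough. The code below is a classical simulation over four amplitudes; the function name and the choice of marked item are assumptions for illustration.

```python
def grover_two_qubits(marked):
    """Simulate one Grover iteration over 4 items; return outcome probabilities."""
    n_items = 4
    # Step 1: uniform superposition over all four basis states.
    state = [0.5] * n_items
    # Step 2: the oracle flips the sign (phase) of the marked item's amplitude.
    state[marked] = -state[marked]
    # Step 3: diffusion, i.e. reflect every amplitude about the mean.
    mean = sum(state) / n_items
    state = [2 * mean - a for a in state]
    # Measurement probabilities are the squared amplitudes.
    return [a * a for a in state]

result = grover_two_qubits(marked=2)
print(result)
```

The wrong answers end with zero amplitude and the marked item with amplitude 1, which is the "cancel the wrong ones, amplify the right one" behavior in its simplest form. Larger searches need more iterations and do not reach certainty this cleanly.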

Quantum vs Classical: How Are They Different?

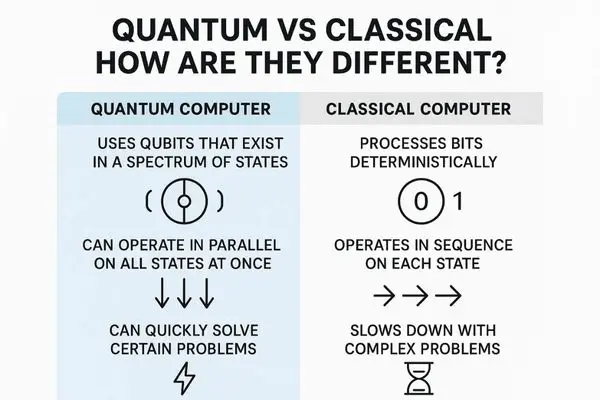

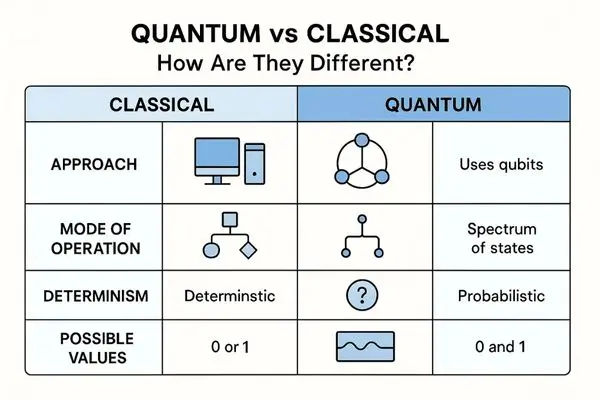

Classical and quantum computers use very different approaches. A classical computer processes bits in a step-by-step, deterministic way, whereas a quantum computer uses qubits that exist in a spectrum of states. In practical terms, this means:

- Massive parallelism: A system of n qubits inherently represents 2^n possibilities at once. For example, two qubits can encode four states simultaneously (00, 01, 10, 11), and three qubits encode eight states, etc. This exponential scaling gives quantum machines a form of built-in parallelism that is impossible for classical machines.

- Different architecture: Classical bits store data in voltage or magnetic states (0 or 1). Qubits may be realized in many physical ways – such as the spin of an electron, energy levels in an atom, or currents in a superconducting loop. For example, IBM and Google build superconducting qubit chips, companies like IonQ use trapped ions, Intel experiments with silicon qubits, and others use photons. The control electronics and refrigeration for these qubits are complex, whereas classical chips just need standard semiconductor fabrication and cooling.

- Unconventional computation model: In simple words, certain problems can be solved by quantum algorithms much faster. Think of the classic maze analogy: a classical computer might “brute force” every path one by one. A quantum computer, using superposition and interference, can effectively “feel out” the maze and eliminate many wrong paths at once, homing in on the exit with fewer operations. It’s not actually testing all paths simultaneously, but the interference of qubit states can reveal the correct path much more efficiently than blind search.
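One way to get a feel for the exponential scaling mentioned in the list above is to ask how much memory a classical computer would need just to store all 2^n amplitudes. The sketch assumes 16 bytes per amplitude (a double-precision complex number), which is a common but not universal choice.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Bytes needed to store every amplitude of an n-qubit state classically.

    bytes_per_amplitude=16 assumes one double-precision complex number each.
    """
    return (2 ** n_qubits) * bytes_per_amplitude

for n in (2, 3, 10, 30, 50):
    gib = statevector_bytes(n) / 2 ** 30
    print(f"{n:>2} qubits -> {2 ** n} amplitudes, about {gib:.3g} GiB")
```

Under this assumption, every additional qubit doubles the memory required, which is why brute-force classical simulation runs out of room after a few dozen qubits.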

Superiority of Quantum Computers:

Because of these differences, a large-scale quantum computer could theoretically outperform classical supercomputers on specific tasks. For instance, Peter Shor’s quantum algorithm could factor large numbers exponentially faster than any known classical method, which has profound implications for encryption.
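To show where the quantum speedup in Shor's algorithm enters, the sketch below runs the classical number-theory wrapper around it: find the period (order) of a number modulo n, then use that period to extract a factor. Here the period is found by brute force, which is exactly the step a quantum computer would replace. The function names and the small inputs are illustrative, and n is assumed to be an odd composite number.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1. Brute force: the slow classical step."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Try to split n using the order of a modulo n; return None if a is unlucky."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None                 # odd order: try another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                 # trivial square root: try another a
    p = gcd(half - 1, n)
    return p, n // p

print(find_order(7, 15))         # order of 7 modulo 15
print(factor_from_order(7, 15))  # factors of 15 recovered from that order
```

For large numbers the brute-force loop in `find_order` becomes hopeless, while the surrounding arithmetic stays cheap; that asymmetry is why a fast quantum period-finding routine matters for encryption.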

However, for ordinary computing tasks (email, word processing, etc.), quantum does not inherently help – current classical computers still handle those efficiently.

In short, quantum computers are expected to “take better advantage of quantum mechanics” for some calculations that are intractable classically (ibm.com), but they are not a drop-in replacement for classical machines in general.

Real-World Applications

Although still largely experimental, researchers and big companies are exploring a wide range of real-world uses where QC could provide breakthroughs.

Key examples include:

Cryptography and cybersecurity.

Quantum algorithms threaten conventional encryption. Shor’s algorithm, running on a powerful quantum computer, could factor large integers and break RSA/ECC encryption quickly.

Today’s quantum machines are far too small for this, and experts estimate that cryptographically relevant quantum computers won’t exist until the 2030s at the earliest.

(To put numbers on it, one analysis suggests ~13 million qubits would be needed to break Bitcoin’s encryption in a day.) Because of this future threat, governments and companies are already migrating to post-quantum cryptography (new algorithms designed to resist attacks from both classical and quantum computers). For example, US policy directs agencies to mitigate quantum risks by 2035.

Drug discovery and chemistry.

Molecules and chemical reactions are inherently quantum in nature, so simulating them is a natural fit for a quantum computer. Classical computers struggle to model large molecules exactly because the number of quantum states that must be tracked grows exponentially with the number of interacting particles.

Quantum computers, by contrast, could efficiently represent those quantum states.

McKinsey and others note that QC can simulate molecular energies and interactions more efficiently, potentially speeding up drug design and materials discovery. In practice, this means that predicting how a new drug molecule binds to a protein, or discovering a more efficient catalyst, might become much faster and more accurate with quantum simulation.

Artificial intelligence and machine learning

Quantum computing may accelerate certain AI/ML tasks. Many ML algorithms rely on optimizing complex functions or processing large vectors – tasks that quantum linear algebra algorithms (like the Harrow-Hassidim-Lloyd algorithm) could theoretically speed up. In general, as McKinsey notes, quantum computers could excel at optimization, simulation, and certain probabilistic algorithms – all of which underlie AI.

Researchers are experimenting with quantum machine learning algorithms that could, for example, speed up the training of neural networks or improve pattern recognition. (It’s still very early days: today’s quantum computers haven’t yet delivered a clear AI advantage, but this is an active area of research.)

Financial modeling and optimization

Banks and financial firms are exploring quantum algorithms for portfolio optimization, risk analysis, and pricing complex derivatives. For instance, solving a portfolio optimization problem can be framed as solving a linear system of equations.

The HHL quantum algorithm can solve certain linear systems faster, and J.P. Morgan’s research team actually implemented a hybrid HHL++ algorithm on a small trapped-ion quantum computer (10 qubits) to solve a tiny toy portfolio problem.

While today’s devices can only handle very small models, the long-term hope is that quantum computers could optimize large portfolios, simulate financial markets more accurately, or speed up Monte Carlo risk simulations that currently take days.
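As a small illustration of how a portfolio problem turns into a linear system, the sketch below computes minimum-variance weights for two assets by solving Σx = 1 and normalizing x. This is the classical calculation only; a quantum algorithm such as HHL would target the linear-solve step. The covariance numbers are invented for the example and the helper names are hypothetical.

```python
def solve_2x2(a, b):
    """Solve the 2x2 linear system a @ x = b using Cramer's rule."""
    (a00, a01), (a10, a11) = a
    det = a00 * a11 - a01 * a10
    if det == 0:
        raise ValueError("matrix is singular")
    x0 = (b[0] * a11 - a01 * b[1]) / det
    x1 = (a00 * b[1] - a10 * b[0]) / det
    return [x0, x1]

def minimum_variance_weights(covariance):
    """Weights proportional to the solution of covariance @ x = [1, 1]."""
    x = solve_2x2(covariance, [1.0, 1.0])
    total = sum(x)
    return [xi / total for xi in x]

# Illustrative covariance matrix for two assets (made-up values).
covariance = [[0.04, 0.01],
              [0.01, 0.09]]

weights = minimum_variance_weights(covariance)
print(weights)
```

With real portfolios the matrix has thousands of rows rather than two, which is where the cost of the linear solve starts to matter.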

Climate and environment modeling.

Complex climate models involve solving massive systems of differential equations (fluid dynamics, chemical transport, etc.), which are extremely computationally intensive.

Quantum computers could, in theory, model certain aspects of climate and weather with higher resolution or speed. For example, one study notes that QC could improve fluid dynamics simulations, allowing more detailed climate forecasting and scenario planning. Quantum optimization could also help optimize logistics for climate mitigation.

Again, these are long-term prospects – but quantum researchers point out that tackling problems like climate change is a key motivation for improving simulation capabilities.

Other areas being explored include materials science (designing new alloys or battery materials) and logistics/transportation (optimizing routing and supply chains).

In general, quantum computing is seen as a potential accelerator for any domain that relies on solving hard mathematical problems (optimization, simulation, factoring, etc.).

However, it is important to note that in most of these fields, classical computers still do the heavy lifting today; quantum methods are more about future potential.

Challenges in Building Quantum Computers

Despite the promise, building practical quantum computers is extremely challenging. Some of the major hurdles are the following:

1. Fragility and decoherence

Qubits are delicate quantum systems that easily lose their quantum state through interactions with the environment. Any stray heat, radiation, or even measuring them inadvertently can cause decoherence, collapsing the qubits to classical states.

To minimize this, qubit hardware must be extremely well-isolated. For example, IBM notes quantum processors operate at about 0.01 Kelvin (about a hundredth of a degree above absolute zero) to reduce thermal noise and preserve qubit coherence.

Achieving and maintaining such temperatures requires complex cryogenic refrigerators and shielding, which greatly complicates system design. Even then, every qubit has a finite coherence time and a nonzero error rate, so circuits must run quickly before decoherence sets in.
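The "run quickly before decoherence sets in" constraint can be sketched with a very simple model in which coherence decays exponentially over time. Both the model and the numbers below (a 100-microsecond coherence time and 50-nanosecond gates) are placeholder assumptions for illustration, not measurements of any specific device.

```python
import math

def coherence_remaining(elapsed_ns, coherence_time_ns):
    """Fraction of coherence left after elapsed_ns, assuming exponential decay."""
    return math.exp(-elapsed_ns / coherence_time_ns)

def gates_within_coherence(coherence_time_ns, gate_time_ns):
    """How many sequential gates fit inside one coherence time."""
    return coherence_time_ns // gate_time_ns

# Hypothetical values chosen only to make the arithmetic easy to follow.
COHERENCE_TIME_NS = 100_000  # 100 microseconds
GATE_TIME_NS = 50            # 50 nanoseconds per gate

budget = gates_within_coherence(COHERENCE_TIME_NS, GATE_TIME_NS)
after_1000_gates = coherence_remaining(1000 * GATE_TIME_NS, COHERENCE_TIME_NS)

print("gates per coherence time:", budget)
print("coherence left after 1000 gates:", round(after_1000_gates, 3))
```

Under these assumptions a circuit has a budget of a couple of thousand sequential gates, and a noticeable share of coherence is already gone halfway through it.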

2. Error correction and scalability.

Because qubits are so error-prone, large-scale quantum computers will need quantum error correction, which in turn requires many physical qubits for each logical qubit. Current devices are “noisy intermediate-scale quantum” (NISQ) machines – they have only tens or hundreds of imperfect qubits with no real error correction.

Moving to a fault-tolerant quantum computer likely means needing thousands or millions of physical qubits. This is an “existential challenge” for the industry.

Leading quantum companies are already planning for this: for example, IBM has announced roadmaps aiming for early error-corrected demonstrations (hundreds of qubits in the mid-2020s) and is targeting a fully fault-tolerant processor by around 2029. However, designing the control systems, wiring, and cooling for such large qubit arrays is a massive engineering effort.
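The trade of many physical units for one reliable logical unit can be illustrated with a classical repetition code, which is a simplified analogy rather than a real quantum error-correcting code. The sketch stores one bit as several copies, decodes by majority vote, and computes how often the vote is wrong when each copy flips independently with probability p.

```python
from math import comb

def majority_decode(bits):
    """Recover the logical bit from an odd number of noisy copies by majority vote."""
    return 1 if sum(bits) > len(bits) // 2 else 0

def logical_error_rate(p, n=3):
    """Probability that more than half of n independent copies are flipped."""
    return sum(
        comb(n, k) * p ** k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

print(majority_decode([0, 1, 0]))  # one flipped copy is outvoted
for copies in (1, 3, 5, 7):
    print(copies, "copies ->", logical_error_rate(0.01, copies))
```

Adding copies drives the logical error rate down quickly, but only when the per-copy error rate is already low, and real quantum codes need far more overhead because they must also handle phase errors without directly reading the data qubits.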

3. Hardware diversity and integration.

Many different qubit technologies exist (superconducting circuits, trapped ions, photonics, topological qubits, etc.), each with pros and cons. IBM and Google use superconducting qubits, IonQ and Honeywell use trapped ions, Intel is developing silicon spin qubits, and researchers elsewhere work on photonic or other approaches.

Each platform has unique technical challenges (e.g., trapped ions must be confined with electromagnetic fields and controlled with lasers, while superconducting qubits need dilution refrigerators). Integrating dozens or hundreds of qubits on a chip requires precision fabrication and control electronics that can scale.

For example, IBM points out that although its quantum processor chip is only about the size of a laptop chip, the entire system (cooling infrastructure and control hardware) is roughly the size of a car (ibm.com). Managing this scale-up while keeping costs and error rates under control is a key challenge.

4. Resource and engineering constraints.

Operating a high-end quantum computer may demand enormous resources. Some analyses suggest a hypothetical large-scale quantum machine could require tens or hundreds of megawatts of power – comparable to a small power plant – just to run its refrigeration and control systems.

The physical footprint, power consumption, and cooling requirements are far greater than for a conventional data center. Additionally, the industry must develop specialized hardware (microwave electronics, lasers, photon detectors, etc.) and software to program these machines, which is still an ongoing effort.

Because of these challenges, quantum advantage (a clear performance benefit for a practical problem) has not yet been achieved for general tasks. Today’s quantum devices can solve contrived examples or small instances (like Google’s 2019 “quantum supremacy” demonstration on a random sampling task), but they cannot yet outperform classical computers on real-world applications. The central hurdles researchers are working to solve are:

- Overcoming decoherence

- Implementing robust error correction

- Scaling up hardware

The Future of this Modern Tech

The field is advancing rapidly with significant investment and interest. Broadly speaking, the future outlook includes:

Growing investment and market forecasts.

Quantum computing is attracting increasing funding from both governments and industry.

Recent reports noted venture funding hitting record highs (on the order of $1–2 billion in 2024 alone) as startups grow rapidly. For context, one survey found 25% of large US tech firms reported investing in quantum initiatives in the past year – about triple the rate from just a year earlier.

Governments are also funding quantum R&D:

For example, China announced about $15.3 billion in quantum technology investment for 2023 (though some question how directly it’s spent) versus roughly $3.8 billion in the US. Overall, both private and public spending on quantum is surging worldwide.

Leading companies and projects.

Major tech companies and startups are racing to build better quantum hardware and software. IBM, Google, Microsoft, Intel, Amazon (with Braket), and Honeywell/Quantinuum are among the big players working on universal quantum processors. D-Wave is developing quantum annealers (a specialized type) and has announced systems with over 5,000 physical qubits for optimization problems. Startups like IonQ, Rigetti, PsiQuantum, Xanadu, Pasqal, and others are also in the mix, each pursuing different technologies.

For example, Amazon recently revealed a new chip architecture using “cat” qubits to simplify error correction, while Google has outlined a roadmap to reach a useful quantum computer in the coming years. Notably, PsiQuantum is aiming for a commercially useful machine by 2027, and Google has suggested it could deliver a capable system within roughly five years (deloitte.com).

IBM’s roadmap has also described a 4,158-qubit system built by linking three 1,386-qubit “Kookaburra” chips, targeted for 2025. These efforts illustrate how industry roadmaps are targeting the late 2020s for major milestones.

Timelines and milestones.

Predictions vary, but a common view is that we may see specialized quantum advantage within the next 5–10 years on niche tasks, and general-purpose fault-tolerant quantum computers by the 2030s. As noted above, experts estimate that cryptographically useful quantum computers (capable of breaking today’s encryption) are unlikely until the 2030s.

Meanwhile, national strategies often aim for the mid-to-late 2020s to have demonstrable prototypes and begin integrating quantum into certain R&D workflows. For example, IBM has stated goals for intermediate quantum processors (hundreds of qubits) by 2026–2028 and fully error-corrected systems by around the end of the decade. Of course, these timelines are highly uncertain – breakthroughs (or setbacks) could shift them.

Global impact and collaboration

Quantum computing has become a strategic focus globally. Countries and alliances are investing in quantum research initiatives (often alongside quantum communication and sensing).

The hope is that within a generation, quantum computers will join classical supercomputers as part of a high-tech computing landscape. In the near term, many experts emphasize collaboration: for instance, combining quantum processors with classical supercomputers (as hybrid systems) to accelerate tasks while quantum technology matures.

Encouraging adoption.

Even before fully fault-tolerant machines exist, companies and research labs are building early use cases. Some are deploying small quantum computers via the cloud for experimentation (for instance, IBM Q and Amazon Braket).

In business, a Deloitte survey found that 76% of firms investing in quantum already report seeing “significant returns” from exploratory projects. Meanwhile, the startup ecosystem is lively: new software toolkits (e.g., Qiskit, Cirq, PennyLane) and algorithm development continue apace.

In summary, the future of quantum computing looks dynamic. Analysts expect it to grow into a multi-billion-dollar industry and reshape sectors like finance, healthcare, energy, and defense over the next decade. Leading companies and governments are committed, and roadmaps are targeting key breakthroughs by 2030.

While many technical challenges remain, the rapid progress of recent years inspires optimism that practical quantum advantages will emerge, even if some targets (like fully breaking RSA encryption) may still be a decade or more away.

Conclusion

Quantum computing harnesses the counterintuitive laws of quantum mechanics to process information in fundamentally new ways. It introduces concepts like qubits, superposition, and entanglement that have no parallel in classical machines.

Although still in its early stages, the field has moved from theory to experimental prototypes and real quantum processors accessible through the cloud. Real-world applications (from decrypting data to simulating molecules) are on the horizon, but only once engineers solve the daunting challenges of error correction and scaling.

As investments surge and the technology matures, the next decade should see quantum computing transition from the lab into select industries. For students, tech enthusiasts, and professionals, this means it’s a prime time to learn about and explore this futuristic technology.

In closing, quantum computing is poised to be one of the most transformative technologies of our generation. Its full impact remains to be seen, but the accelerating pace of research suggests we have only begun to tap the quantum frontier. The quantum revolution is coming – and it will be worth watching closely.
